We all rave about TDD (Test-Driven Development). But have you asked yourself these questions?

· “Do my unit tests truly cover blocks (or lines) of code that I’ve implemented?”

· “How do I ensure that a specific feature (implementation) is doing what it’s supposed to do?”

· “Is there a possibility that a block or line of code that I’ve written is not being touched by my unit tests?”

In this post, however, I’m not going to walk through the practices of unit testing or how it works. There are many resources and plenty of literature available for that (just google TDD). Most of you already know how to do this, but I do want to share my experience and practices around unit testing “WITH” code coverage. Have you used code coverage before? If not, let’s start with that.

So, what is code coverage? Simply put: “it is a measure (%) used to describe the degree to which the source code of a program is executed when a particular test suite runs”

Source: https://en.wikipedia.org/wiki/Code_coverage

We also say that a program with a high degree of code coverage has a lower chance of containing undetected software defects than a program with low code coverage, again depending on the test suite. It’s easy to produce tons of tests that should cover the code, but the point is to measure whether they actually do. Covering code means you need quality tests that verify the functionality of the blocks or lines of code that you wrote (the quantity isn’t that important). Which boils down to this: you are not writing “Regression Tests” (validating edge cases and/or test case families) when writing unit tests to measure code coverage. However, you may have requirements to implement certain rules that touch edge case scenarios. In that case, you write unit tests for them, because they are now logic that you will implement.

Finding the sweet spot! When is “enough” enough? It’s when you can make changes to your code with confidence that you’re not breaking anything. To me, that means you have tested every block of code that has logic and/or implementation in place. As a .NET developer, I’ve come to wonder: what about properties, especially auto-generated properties? Do we count them toward code coverage numbers? My answer is no. Auto-generated properties, and properties in general, don’t have logic in place by default. So why write unit tests for something that doesn’t have logic? And why measure it through code coverage?
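If you decide that logic-free members should not count toward the numbers, one option is the ExcludeFromCodeCoverage attribute from System.Diagnostics.CodeAnalysis. The sketch below is illustrative only: RepoInfo and its members are hypothetical names, not part of the sample project, and whether the attribute is honored depends on the coverage tool you use.

```csharp
using System.Diagnostics.CodeAnalysis;

// Hypothetical model class, used only to illustrate the idea.
public class RepoInfo
{
    // Auto-property with no logic: excluded from coverage results.
    [ExcludeFromCodeCoverage]
    public string Name { get; set; }

    // Property with logic: this one deserves a unit test and stays measured.
    public string DisplayName
    {
        get { return string.IsNullOrWhiteSpace(Name) ? "(unnamed)" : Name.Trim(); }
    }
}
```

With this split, a test only needs to exercise DisplayName, and the coverage percentage reflects code that actually contains logic.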

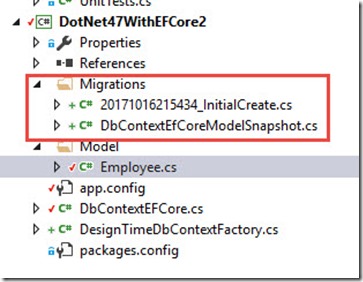

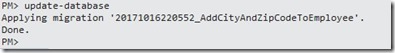

Here, I’ll start off with a project that has a unit test available and some level of functionality. Consider the following unit test:

    [TestMethod]
    public void ValidateGetRequest()
    {
        var uri = new Uri("https://api.github.com/users/mikelo/repos");
        var jsonresponse = new HttpConnectionService().GetResponse(uri);
        Assert.IsTrue(jsonresponse.Contains("38358544"));
    }

This unit test validates getting a JSON response from a web API, in this case a valid public API from GitHub. For simplicity, I just want to do an HTTP GET against this web API to get the repos (Git repositories) of a GitHub contributor.

Below is the implementation:

    public string GetResponse(Uri url)
    {
        string jsonresponse;
        using (var client = new HttpClient())
        {
            client.DefaultRequestHeaders.Accept.Add(new MediaTypeWithQualityHeaderValue("application/json"));
            client.DefaultRequestHeaders.Add("User-Agent", "client agent");
            var response = client.GetAsync(url).Result;
            jsonresponse = response.Content.ReadAsStringAsync().Result;
        }
        return jsonresponse;
    }

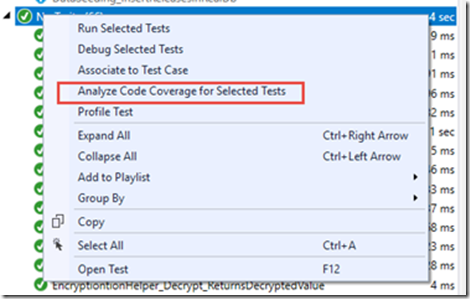

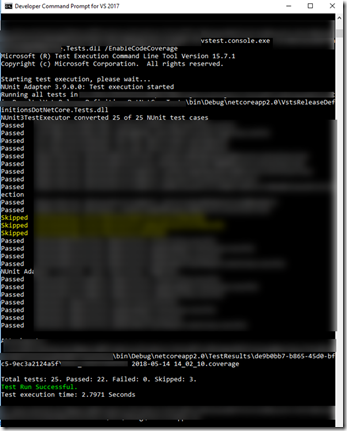

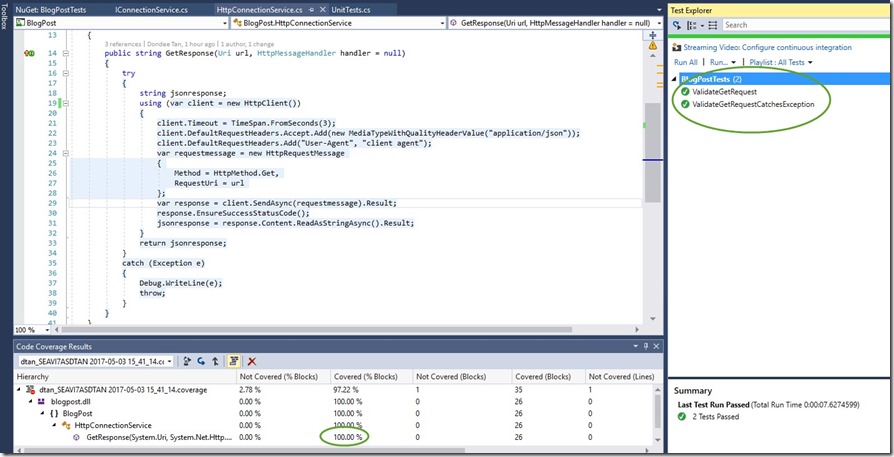

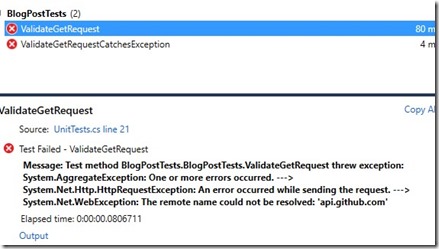

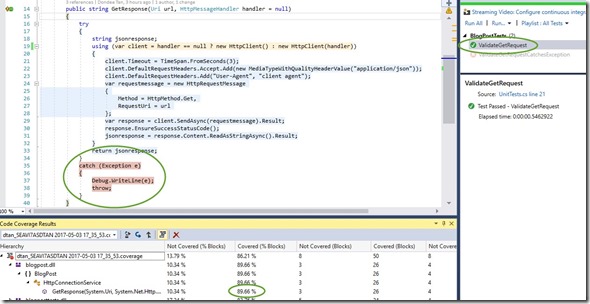

Running the tests yields the following results:

So far so good. We’ve written a unit test that validates a response from a public web API, and we’ve ensured that the test covers 100% of the code implementation.

A problem arises from this. It’s apparent that we have a major dependency in our unit test. Nowadays we rely on build systems (such as Jenkins and VSTS) to compile, test and publish artifacts. The practice behind unit testing is to ensure that all unit tests are decoupled and have no external dependencies.

Besides dependencies, I’m sure you’ve realized by now that there’s no guarantee every call you make will succeed. So now comes the next cycle of our work: let’s expand the implementation to include a try-catch block, so that we can later customize what we return or throw back to the user when a problem occurs.

We start by creating a new test to validate that any exceptions thrown are caught in the catch block. Again, this follows the TDD cycle of Red -> Green -> Refactor.

We wrote the unit test and watched it fail; now we need to make it pass. How do we pass a unit test in which an exception is expected? In MSTest V2, we can apply an attribute to a test method that declares the expected exception type. The test then passes only when an exception of that type is thrown. Here’s the newly created unit test:

    [TestMethod]
    [ExpectedException(typeof(HttpRequestException))]
    public void ValidateGetRequestCatchesException()
    {
        var uri = new Uri("https://apiXXXX.github.com/users/mikelo/repos");
        var jsonresponse = new HttpConnectionService().GetResponse(uri);
    }

Notice that the URL was changed to point to a non-existent host. We also refactored the implementation by adding a try-catch block and this line: response.EnsureSuccessStatusCode();

    public string GetResponse(Uri url)
    {
        try
        {
            string jsonresponse;
            using (var client = new HttpClient())
            {
                client.DefaultRequestHeaders.Accept.Add(new MediaTypeWithQualityHeaderValue("application/json"));
                client.DefaultRequestHeaders.Add("User-Agent", "client agent");
                var response = client.GetAsync(url).Result;
                response.EnsureSuccessStatusCode();
                jsonresponse = response.Content.ReadAsStringAsync().Result;
            }
            return jsonresponse;
        }
        catch (Exception e)
        {
            Debug.WriteLine(e);
            throw;
        }
    }

Calling EnsureSuccessStatusCode() throws an exception if the IsSuccessStatusCode property of the HTTP response is false. This is my own implementation, and I’m sure there are many other ways to catch and throw errors/exceptions back to users.
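To see that behavior in isolation, here is a small sketch that builds an HttpResponseMessage by hand, with no network call involved. EnsureSuccessDemo and ThrowsOnNotFound are hypothetical names made up for this illustration.

```csharp
using System.Net;
using System.Net.Http;

// Hypothetical helper, used only to illustrate EnsureSuccessStatusCode().
public static class EnsureSuccessDemo
{
    // Returns true when a 404 response makes EnsureSuccessStatusCode() throw.
    public static bool ThrowsOnNotFound()
    {
        using (var response = new HttpResponseMessage(HttpStatusCode.NotFound))
        {
            try
            {
                response.EnsureSuccessStatusCode();
                return false; // not reached for a non-success status code
            }
            catch (HttpRequestException)
            {
                return true;
            }
        }
    }
}
```

Because the response object is constructed locally, this check runs the same way on a build server with no outbound connectivity.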

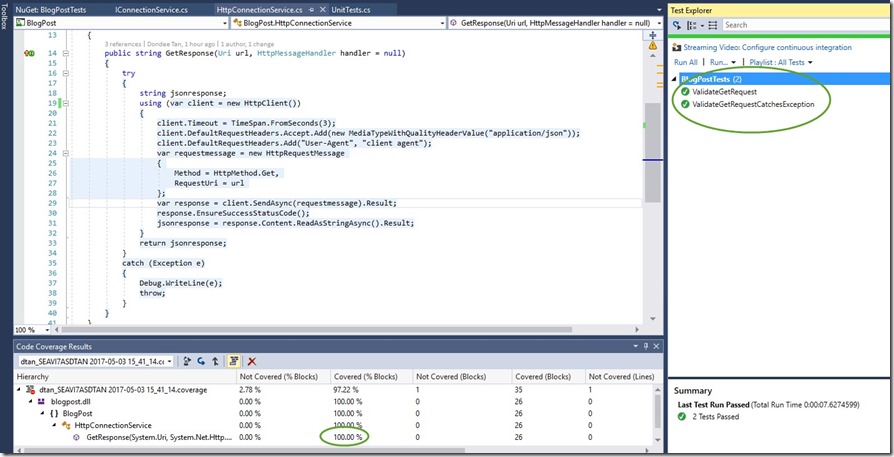

So far so good. I have 2 unit tests that:

· Pass

· Verify that the code I wrote is covered, as shown by Code Coverage at 100%

(Note: The blue highlighted section of the screenshot)

While we’ve satisfied the basic principles of TDD + Code Coverage thus far, we’re still left with the point of isolation. Current TDD practice suggests that any unit test should run in isolation, without calling any real dependencies.

Our unit tests still rely on a working API endpoint. This is problematic, because unit tests should be autonomous.

The solution? Mocking/faking endpoints! The part I love most about TDD is that your code inevitably moves toward better design through Dependency Injection and/or Inversion of Control.

Before I go further, it’s worthwhile to talk about mocking (faking) and Dependency Injection. I’ll briefly show you how dependency injection works later.

What is mocking? Mocking is primarily used in unit testing. It isolates the object or function you want to test by simulating the behavior of the real objects and/or functions it depends on.

There are many articles, guides and practices around mocking. Two well-known mocking frameworks are used by many developers:

1) Moq – My favorite. Easy to use and open source, with many contributors. Moq is hosted on GitHub and works well in .NET. https://github.com/Moq/moq4/wiki/Quickstart

2) RhinoMocks – Same concept as Moq. https://hibernatingrhinos.com/oss/rhino-mocks

What is Dependency Injection? If you’ve been practicing TDD, I’m quite certain your code will eventually lead to dependency injection.

Snippet from Wikipedia:

https://en.wikipedia.org/wiki/Dependency_injection

“Dependency injection is a technique whereby one object supplies the dependencies of another object. A dependency is an object that can be used (a service). An injection is the passing of a dependency to a dependent object (a client) that would use it. The service is made part of the client’s state, passing the service to the client, rather than allowing a client to build or find the service, is the fundamental requirement of the pattern.”

For .NET developers: Dependency Injection usually means defining an interface for the dependency and accepting that interface as a parameter in the class constructor.

Let’s go back to our code; we’ll consider dependency injection later.

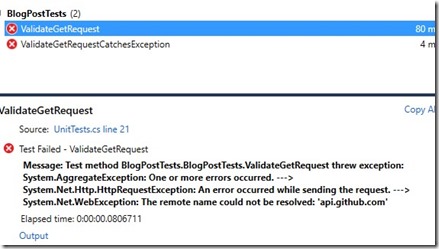

Unit Tests: CHECK. Code Coverage: CHECK. As I write this post, I’m en route to San Francisco. What a great way to work on TDD and make sure I start mocking at this point: since I don’t have a connection to the public API, my tests will fail.

Luckily, the implementation I chose for the API request is HttpClient (built into .NET), which lets me pass in an “HttpMessageHandler” that I can use to mock the data.

With a little bit of refactoring (again, the Red -> Green -> Refactor analogy), here’s the modified version of the HttpConnectionService class:
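To make that hook concrete, here is a hand-written stub handler as a rough sketch; it does the same job as a mocked handler without relying on a mocking library. StubHandler is a hypothetical class for illustration, not part of the sample project.

```csharp
using System.Net;
using System.Net.Http;
using System.Text;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical stub: returns a canned response without touching the network.
public class StubHandler : HttpMessageHandler
{
    private readonly HttpStatusCode _status;
    private readonly string _body;

    public StubHandler(HttpStatusCode status, string body)
    {
        _status = status;
        _body = body;
    }

    // HttpClient routes every request through this method.
    protected override Task<HttpResponseMessage> SendAsync(
        HttpRequestMessage request, CancellationToken cancellationToken)
    {
        var response = new HttpResponseMessage(_status)
        {
            Content = new StringContent(_body, Encoding.UTF8, "application/json")
        };
        return Task.FromResult(response);
    }
}
```

Passing new StubHandler(HttpStatusCode.OK, "Mock Response. OK") to the HttpClient constructor makes every request return that body, whatever the URI is. A mocking framework gives you the same effect with less boilerplate once you need many variations.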

    public string GetResponse(Uri url, HttpMessageHandler handler = null)
    {
        try
        {
            string jsonresponse;
            using (var client = handler == null ? new HttpClient() : new HttpClient(handler))
            {
                client.Timeout = TimeSpan.FromSeconds(3);
                client.DefaultRequestHeaders.Accept.Add(new MediaTypeWithQualityHeaderValue("application/json"));
                client.DefaultRequestHeaders.Add("User-Agent", "client agent");
                var requestmessage = new HttpRequestMessage
                {
                    Method = HttpMethod.Get,
                    RequestUri = url
                };
                var response = client.SendAsync(requestmessage).Result;
                response.EnsureSuccessStatusCode();
                jsonresponse = response.Content.ReadAsStringAsync().Result;
            }
            return jsonresponse;
        }
        catch (Exception e)
        {
            Debug.WriteLine(e);
            throw;
        }
    }

Here’s a modified version of the Unit Tests:

    [TestMethod]
    public void ValidateGetRequest()
    {
        // No need to specify a valid URI. I'm mocking the "state" or behavior at this point.
        // var uri = new Uri("https://api.github.com/users/mikelo/repos");
        var mockhandler = new Mock<HttpMessageHandler>();
        mockhandler.Protected()
            .Setup<Task<HttpResponseMessage>>("SendAsync", ItExpr.IsAny<HttpRequestMessage>(), ItExpr.IsAny<CancellationToken>())
            .Returns(Task<HttpResponseMessage>.Factory.StartNew(() => new HttpResponseMessage
            {
                StatusCode = HttpStatusCode.OK,
                Content = new StringContent("Mock Response. OK", Encoding.UTF8, "application/json")
            }));
        var jsonresponse = new HttpConnectionService().GetResponse(new Uri("http://someuri"), mockhandler.Object);
        Assert.IsTrue(jsonresponse.Contains("Mock Response. OK"));
    }

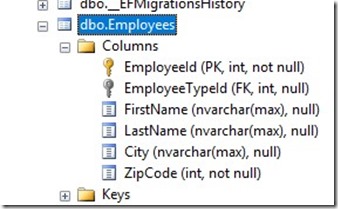

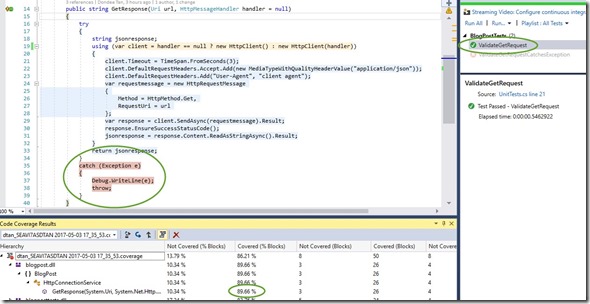

Passing the first test yields the following code coverage result:

I passed the first unit test because I was able to mock the request. However, this test doesn’t cover all the code blocks: Code Coverage is at 89.6%. Great progress so far! Note that the code coverage % is relative to the total number of code blocks developed. The important detail in this picture is that this unit test didn’t go through the exception block. Let’s fix the other test to validate that exceptions are caught. Below is the modified version of the unit test that validates exception handling:

    [TestMethod]
    [ExpectedException(typeof(HttpRequestException))]
    public void ValidateGetRequestCatchesException()
    {
        var mockhandler = new Mock<HttpMessageHandler>();
        mockhandler.Protected()
            .Setup<Task<HttpResponseMessage>>("SendAsync", ItExpr.IsAny<HttpRequestMessage>(),
                ItExpr.IsAny<CancellationToken>())
            .Throws<HttpRequestException>();
        var jsonresponse = new HttpConnectionService().GetResponse(new Uri("http://someuri"), mockhandler.Object);
    }

Both tests pass! Code Coverage went up to 96.55%! And both tests reached the relevant code blocks in the implementation.

Why wasn’t I able to achieve 100% code coverage even though the unit tests covered the path to all code blocks? It seems that when you use certain built-in .NET features (highlighted in yellow), the coverage tool treats them as an ambiguous state. I can only assume at this point that this is how code coverage works in Visual Studio and/or in the code coverage libraries used with MSTest.

At this point, it’s debatable whether 100% code coverage should be met all the time. In the last refactoring, we didn’t reach 100% code coverage, but that’s acceptable in this case. I would bet that as you continue to practice TDD (now with code coverage in mind), you will settle on an acceptable range of code coverage %. In other words, you won’t attain 100% code coverage every time.

The real point here is that we’ve met the criteria for TDD by ensuring that the unit tests we wrote validate any change or refactoring. This is what TDD + Code Coverage does. With Code Coverage, you ensure that any change or refactoring you make is backed by valid unit tests, and the coverage numbers show it.

A modified version of the TDD cycle would show:

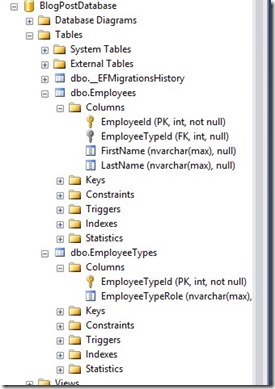

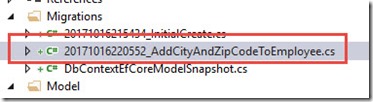

Now let’s expand the code a bit by introducing DI (Dependency Injection). I’ll add an abstraction layer (façade) so that the user doesn’t call the connection service directly, but instead calls this façade class to work with data. This is a common practice, so that the façade layer can take on any other business logic or requirement. Here’s the new class implementation:

    public class GithubApiService
    {
        private readonly IConnectionService _connectionService;

        public GithubApiService(IConnectionService connectionService)
        {
            _connectionService = connectionService;
        }

        public string GetReposFromGitHub(Uri uri, HttpMessageHandler handler = null)
        {
            var response = _connectionService.GetResponse(uri, handler);
            return $"From GitHubApiService Class. Response Value: {response}";
        }
    }

I used DI to pass the IConnectionService in the constructor. When I do this:

1) I declare, through the constructor parameter, that an implementation of the IConnectionService interface must be passed in during instantiation.

2) I can then mock the data directly at the façade layer instead of at the ConnectionService layer.
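The post doesn’t show the IConnectionService interface itself, so here is a guess at its shape, inferred from how GetResponse is called above. Treat it as an assumption rather than the actual definition. FakeConnectionService is a hypothetical hand-rolled fake, included only to show that any implementation can be injected.

```csharp
using System;
using System.Net.Http;

// Assumed shape of the interface; inferred from the calls to GetResponse.
public interface IConnectionService
{
    string GetResponse(Uri url, HttpMessageHandler handler = null);
}

// Hypothetical fake: returns a fixed string instead of calling a real endpoint.
public class FakeConnectionService : IConnectionService
{
    public string GetResponse(Uri url, HttpMessageHandler handler = null)
    {
        return "Fake Response. OK";
    }
}
```

With this in place, new GithubApiService(new FakeConnectionService()) would exercise the façade without any network access, provided HttpConnectionService also implements the same interface for real usage.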

As you might guess, here’s the Unit Test for validating the new façade class.

    [TestMethod]
    public void ValidateGithubApiService_GetReposFromGitHub()
    {
        var mockhandler = new Mock<IConnectionService>();
        mockhandler.Setup(service => service.GetResponse(It.IsAny<Uri>(), null)).Returns("Mock Response. OK");
        var githubapiservice = new GithubApiService(mockhandler.Object);
        var response = githubapiservice.GetReposFromGitHub(new Uri("http://someuri"));
        Assert.IsTrue(response.Contains("From GitHubApiService Class. Response Value: Mock Response. OK"));
    }

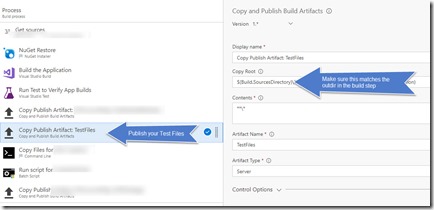

Final Screenshot!

Here’s an actual usage of the façade layer without mocking data:

    [TestMethod]
    public void ValidateGithubApiService_GetReposFromGitHubWIthConnectionService()
    {
        var githubapiservice = new GithubApiService(new HttpConnectionService());
        var response = githubapiservice.GetReposFromGitHub(new Uri("https://api.github.com/users/mikelo/repos"));
        Assert.IsTrue(response.Contains("38358544"));
    }

There’s a lot more to learn about Dependency Injection. This post is not intended as a deep dive into DI or mocking. Rather, the point is that when we practice TDD, we should also account for Code Coverage as a measure of quality.